Introduction

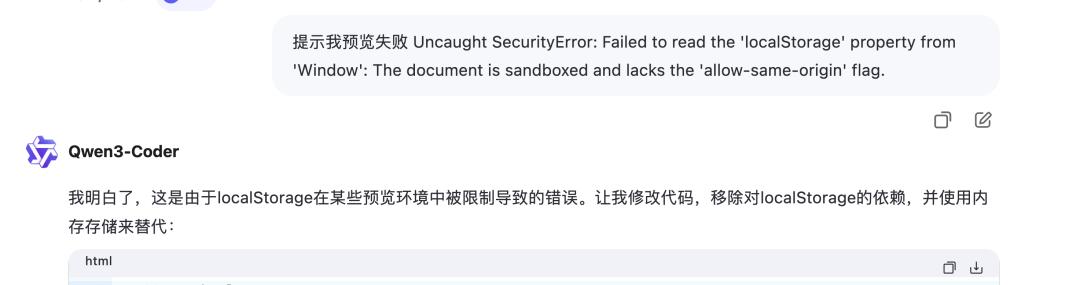

If you are familiar with Vibe Coding products, you might recognize their role as a “co-pilot”. They help monitor your progress during long coding sessions, assist in completing lines of code, or even generate specific functions while you take a break.

However, for a long time, these products have primarily acted as “co-pilots”, responding passively to user commands without understanding the underlying intentions or goals of the developer.

But what if AI could transcend this role? What if it could grasp where you are trying to go, anticipate the challenges ahead, and plan and execute tasks on its own once you name the destination? Then it would truly become a “full-stack engineer”.

Today, I put Alibaba’s newly open-sourced Qwen3-Coder through an in-depth test. The company claims it is currently state of the art (SOTA) for coding among open-source models.

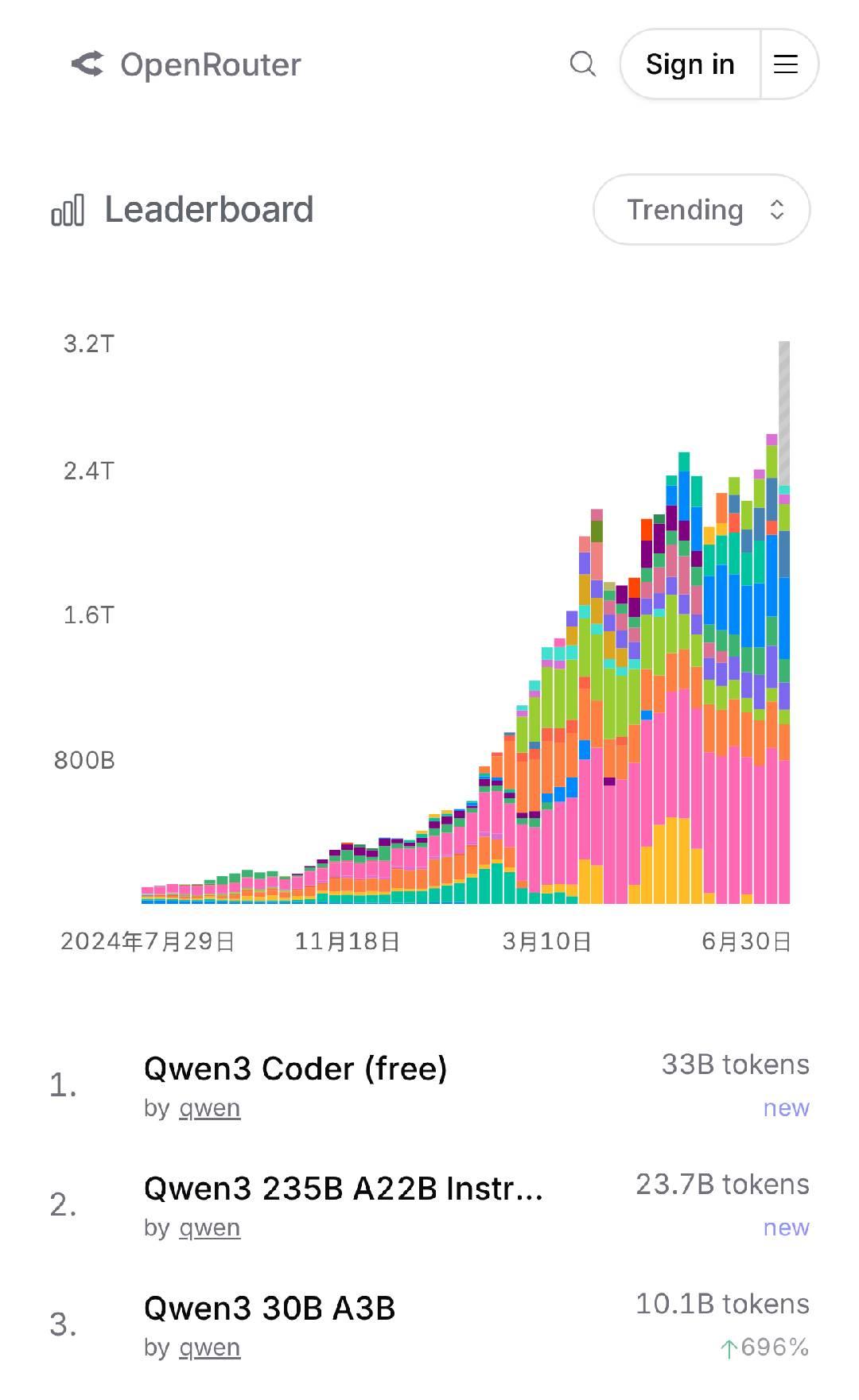

According to OpenRouter, a well-known API aggregation platform, API call volume for Qwen has surged past 100 billion tokens in just a few days. That places it in the global top three on OpenRouter’s trend chart and makes it the hottest model of the moment.

This week, Alibaba open-sourced three significant models, including Qwen3-Coder. They top the global open-source rankings for foundation, programming, and reasoning models respectively. The Qwen3 reasoning model rivals leading closed-source models such as Gemini 2.5 Pro and o4-mini in creative writing, mathematics, and multilingual ability, achieving the best performance among open-source models.

To be honest, Qwen3-Coder had been hailed as the “best programming model in the world” and had topped the Hugging Face model leaderboard. Even so, I approached it with cautious optimism, expecting just another domestic model.

However, after a day of testing and close interaction, this self-proclaimed SOTA model genuinely gave me a different Vibe Coding experience.

A Programming Model That Creates Digital Spaces

My first experience with Qwen3-Coder began with a series of challenging tests that I previously found difficult or impossible to complete.

I started with a classic “AI design taste test”, giving it a somewhat audacious prompt:

“Create a homepage for Geek Park as a tech news media site, featuring a modern navigation bar, eye-catching colors, a concise company introduction, a clear content section, and a complete footer.”

In my experience with Grok, ChatGPT, and similar products, such requests often produced a disaster straight out of the 1990s. Layouts were chaotic and color schemes glaring, amounting to a public execution of modern design sensibilities.

Honestly, before the results came back, I was mentally prepared for a messy skeleton of tags that I would have to rebuild from scratch.

However, when the code was generated and rendered in the preview, I was presented with a complete page that featured a highly unified design language, responsive layout, and even interface animations.

Homepage generated by Qwen3-Coder | Image source: Geek Park

If the initial amazement was purely visual, the subsequent tests began to touch on its deeper “soul”.

I posed a more abstract challenge:

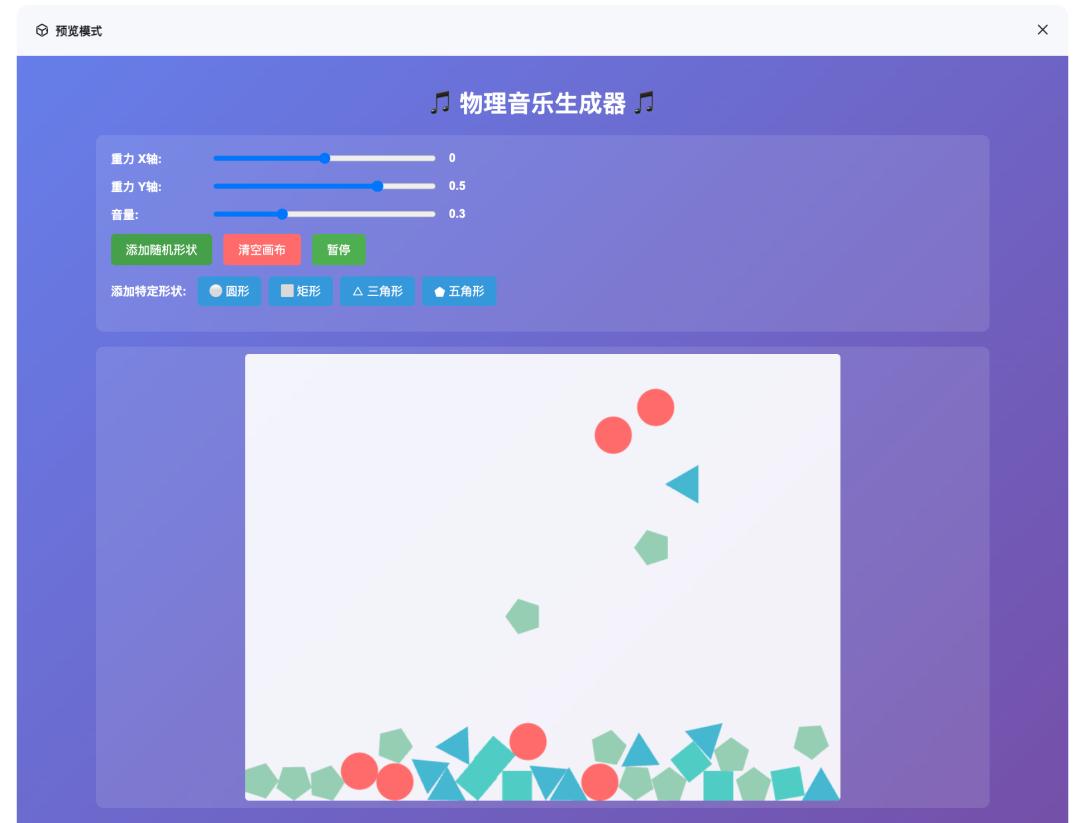

“Create a physics engine-based music generator using Matter.js, allowing different shaped objects to fall freely on the canvas. When they collide, they should produce different musical notes based on their shapes, and I need a ‘gravity controller’ to change their falling trajectories in real-time.”

The difficulty of this task is that it requires the AI to understand not only the code but also the world behind it.

Code is rational, but the rhythm of physics and the harmony of music carry a touch of emotional warmth. Qwen3-Coder once again exceeded my expectations. It implemented every feature: balls and squares fell across the canvas, and each collision produced a harmonious note.

When you drag the gravity controller, the trajectories of all objects change, turning a soothing melody into a frantic one and playing a chaotic symphony on your screen. It did more than deliver the features; the result had an unexpected aesthetic beauty.

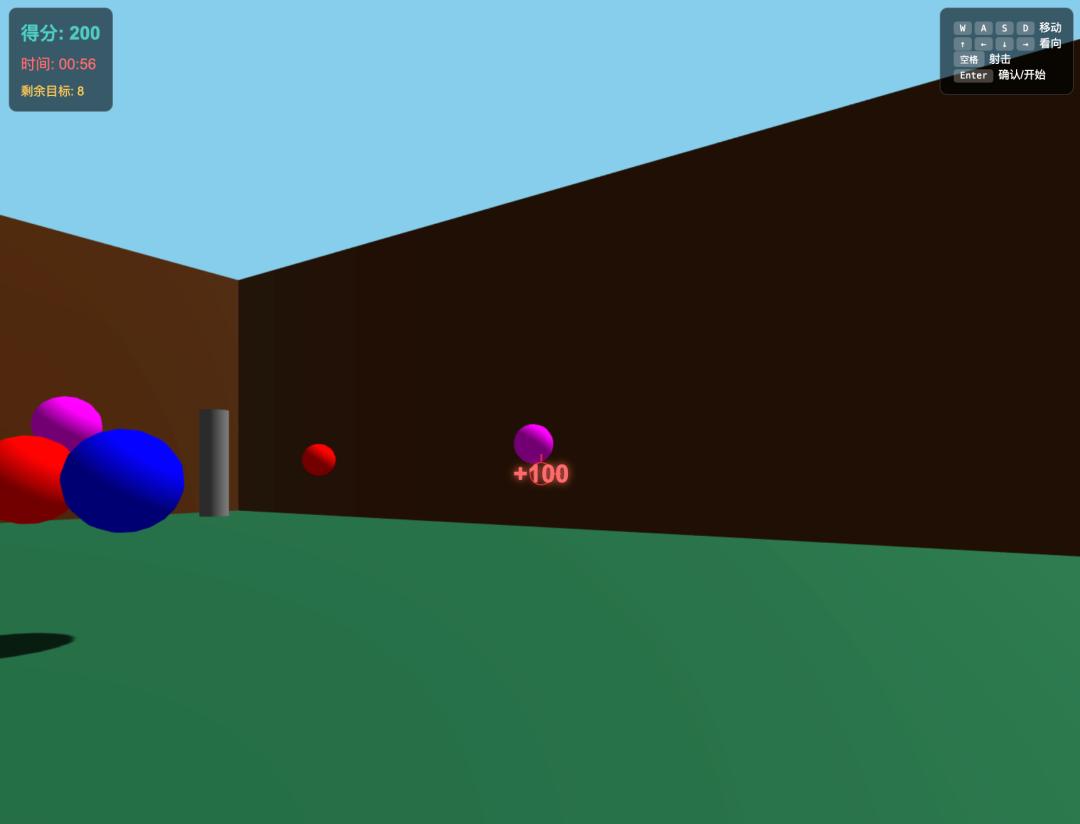

To further explore its boundaries, I threw out a game generation challenge, asking it to create a fully keyboard-controlled 3D shooting game with multiple interactive objects, a simple “storyline”, and an “Easter egg” that would allow quick completion if discovered in the code.

In the generated result, Qwen3-Coder implemented gravitational acceleration for the targets and collision detection. Most surprisingly, it built a 3D sandbox world and correctly implemented vector projection and distance detection inside this small game.
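The game code itself isn't reproduced here. For readers unfamiliar with the terms, vector projection and distance detection are commonly combined in a shooter as a hit test:

1. Project the vector from the shooter to the target onto the shot direction. This finds the closest point along the shot.
2. Compare that point's distance from the target with the target's radius.

Below is a minimal sketch that assumes spherical hitboxes. `Vec3` and `ray_hits_sphere` are illustrative names, not taken from Qwen3-Coder's output.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    x: float
    y: float
    z: float

    def __add__(self, other: "Vec3") -> "Vec3":
        return Vec3(self.x + other.x, self.y + other.y, self.z + other.z)

    def __sub__(self, other: "Vec3") -> "Vec3":
        return Vec3(self.x - other.x, self.y - other.y, self.z - other.z)

    def scale(self, k: float) -> "Vec3":
        return Vec3(self.x * k, self.y * k, self.z * k)

    def dot(self, other: "Vec3") -> float:
        return self.x * other.x + self.y * other.y + self.z * other.z

    def length(self) -> float:
        return math.sqrt(self.dot(self))


def ray_hits_sphere(origin: Vec3, direction: Vec3, center: Vec3, radius: float) -> bool:
    """True if a shot from `origin` along `direction` passes within `radius` of `center`."""
    # Normalize the shot direction so the projection is measured in world units.
    d = direction.scale(1.0 / direction.length())
    to_target = center - origin
    # Vector projection: distance along the shot to the point closest to the target.
    t = to_target.dot(d)
    if t < 0:
        # The target is behind the shooter; this sketch ignores it.
        return False
    closest = origin + d.scale(t)
    # Distance detection: does the closest approach fall inside the hitbox?
    return (center - closest).length() <= radius


# A shot straight down +z grazes a target 0.5 units off-axis, 10 units away.
print(ray_hits_sphere(Vec3(0, 0, 0), Vec3(0, 0, 1), Vec3(0, 0.5, 10), 1.0))  # True
```

Projecting first avoids stepping the bullet frame by frame. One dot product and one distance check decide the hit.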

In terms of physics simulation, it could easily reproduce the classic bouncing ball game as well.

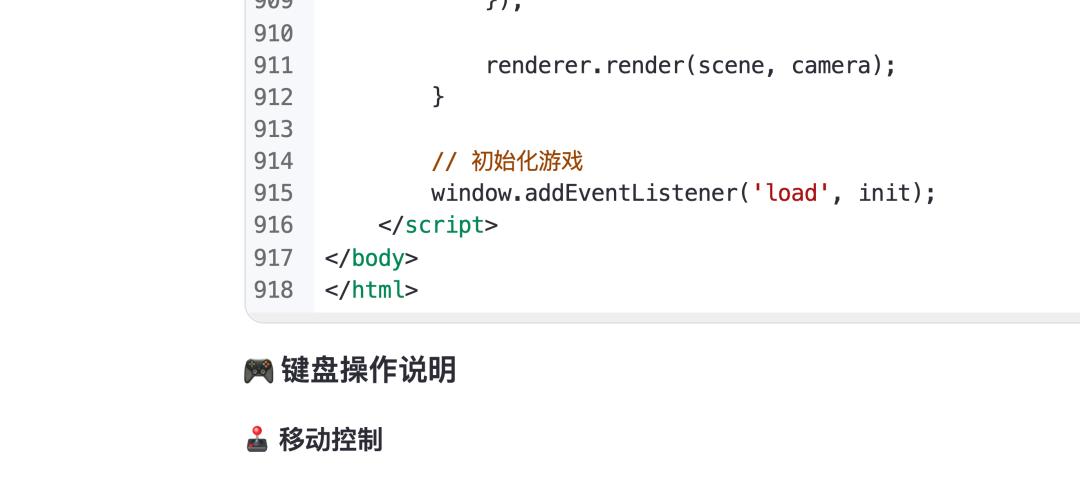

In addition to these practical examples, there was another dimension of experience during the tests that deserves special mention: its generation speed and contextual memory for lengthy tasks.

In my testing, more than ten different development use cases were each completed in roughly 1 to 3 minutes.

Over 900 lines of code generated in just three minutes, significantly accelerating the iteration speed of code | Image source: Geek Park

This speed makes for a more fluid creative flow than earlier code generation models, letting developers quickly turn ideas into reality. I could adjust and iterate on code versions based on the results without long waits breaking my train of thought.

Everyone in the industry is talking about “Vibe Coding” right now. Vibe is undoubtedly the future of human-computer interaction, rooted in intuition and inspiration. But we should also recognize that every smooth “Vibe” experience rests on solid, reliable “Coding”.

How a World-Class Programming Model is Forged

Qwen3-Coder’s evolution from a “code completer” to an “autonomous developer” stems primarily from its architecture: the scale and efficiency of a Mixture of Experts (MoE) design.

Traditional dense large models resemble a knowledgeable but generalist professor. They understand many things, yet they still expend considerable effort on specialized problems. In contrast, the “super-sized” version of Qwen3-Coder acts like a think tank with a vast knowledge base of 480 billion parameters, divided internally into many highly specialized “domain experts”.

When you pose a question, the model does not run all of its parameters. Instead, for each token it processes, a router activates a relevant “expert group” totaling about 35 billion parameters. This design preserves a vast knowledge capacity and a high capability ceiling while keeping the computational cost of each inference within a reasonable range. That balance between capability and inference efficiency is key to its ability to handle complex problems.
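To make the “expert group” idea concrete: in a typical MoE layer, a learned router scores every expert for each token, and only the top-k experts actually run. The toy sketch below uses made-up sizes (8 experts, top-2 routing, 4-dimensional vectors) and trivial experts that just scale their input. It illustrates the general technique, not Qwen3-Coder's actual router or expert configuration.

```python
import math
import random
from typing import Callable, List, Tuple

Vector = List[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: List[float]) -> List[float]:
    # Subtract the max for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(token: Vector, router: List[Vector], experts: List[Expert],
                k: int = 2) -> Tuple[Vector, List[int]]:
    """Toy MoE layer for a single token: only the top-k experts are evaluated."""
    # Router: one score per expert (dot product of the token with that expert's router row).
    scores = [sum(t * w for t, w in zip(token, row)) for row in router]
    # Keep the k highest-scoring experts; every other expert is skipped entirely.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Gate weights are a softmax over the chosen experts' scores only.
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        expert_out = experts[i](token)
        out = [o + gate * y for o, y in zip(out, expert_out)]
    return out, chosen


# Usage with made-up sizes: 8 experts, 4-dimensional tokens, top-2 routing.
random.seed(0)
DIM, N_EXPERTS = 4, 8
router = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(N_EXPERTS)]
# Each toy expert just scales its input by a different constant.
experts = [lambda v, s=i + 1: [s * x for x in v] for i in range(N_EXPERTS)]
output, used = moe_forward([0.5, -0.2, 0.1, 0.9], router, experts, k=2)
print("experts used:", used)
```

Consider the stated figures of 480B total and 35B active parameters. Roughly 7% of the weights take part in each token's computation (35 / 480 ≈ 0.073), which is where the cost savings come from.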

Additionally, the Alibaba Qwen team believes that programming tasks are inherently suited to execution-driven reinforcement learning. Code correctness can be verified directly by running the code, which is the most objective standard available. Building on this, the team built a large-scale reinforcement learning infrastructure capable of running 20,000 independent environments in parallel.
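Here is a sketch of how code correctness can serve as a reward signal: run a candidate solution against unit tests and use the pass rate as the reward. It makes several simplifying assumptions:

- The candidate defines a hypothetical `solve` entry point.
- Tests are input/expected pairs.
- A plain subprocess with a timeout stands in for a real isolated sandbox.

It is not the Qwen team's published infrastructure.

```python
import json
import subprocess
import sys
import tempfile
import textwrap
from pathlib import Path

# Small harness run in a separate process: load the candidate, count passing cases.
HARNESS = textwrap.dedent("""
    import json, sys
    ns = {}
    with open(sys.argv[1], encoding="utf-8") as f:
        exec(f.read(), ns)
    cases = json.loads(sys.argv[2])
    passed = 0
    for args, expected in cases:
        try:
            if ns["solve"](*args) == expected:
                passed += 1
        except Exception:
            pass
    print(passed)
""")


def execution_reward(candidate_src: str, cases: list, timeout: float = 5.0) -> float:
    """Fraction of test cases the candidate passes; 0.0 on crash, syntax error, or timeout."""
    with tempfile.TemporaryDirectory() as tmp:
        cand = Path(tmp) / "candidate.py"
        harness = Path(tmp) / "harness.py"
        cand.write_text(candidate_src, encoding="utf-8")
        harness.write_text(HARNESS, encoding="utf-8")
        try:
            result = subprocess.run(
                [sys.executable, str(harness), str(cand), json.dumps(cases)],
                capture_output=True, text=True, timeout=timeout,
            )
        except subprocess.TimeoutExpired:
            return 0.0
        if result.returncode != 0:
            return 0.0
        # The harness prints the pass count as its last line.
        return int(result.stdout.strip().splitlines()[-1]) / len(cases)


good = "def solve(a, b):\n    return a + b\n"
buggy = "def solve(a, b):\n    return a - b\n"
cases = [[[1, 2], 3], [[0, 0], 0], [[5, 5], 10]]
print(execution_reward(good, cases))   # 1.0
print(execution_reward(buggy, cases))
```

A graded pass rate gives partial credit that a simple pass/fail signal would not. Running the candidate in a separate process keeps a crash or infinite loop from taking down the trainer.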

You can think of it as a software company with 20,000 “digital interns”. Here, the model can massively simulate real software engineering processes: receiving a vague task, autonomously planning and breaking it down, then calling external tools (like code executors and testing frameworks) to attempt solutions and learn from the feedback (success, failure, or specific error messages), iterating and self-correcting based on that feedback.
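The trial-and-error loop described above (attempt, run, read the feedback, revise) can be sketched as a small driver. Everything here is hypothetical. `propose` stands in for the model and is stubbed with two canned attempts. In-process `exec` replaces a real isolated environment and testing framework.

```python
import traceback
from typing import Callable, List, Optional, Tuple

Case = Tuple[tuple, object]


def run_tests(src: str, cases: List[Case]) -> Optional[str]:
    """Return None if every case passes, otherwise a short feedback message."""
    ns: dict = {}
    try:
        exec(src, ns)
        for args, expected in cases:
            got = ns["solve"](*args)
            if got != expected:
                return f"solve{args} returned {got!r}, expected {expected!r}"
    except Exception:
        # Error messages are feedback too.
        return traceback.format_exc(limit=1)
    return None


def agent_loop(propose: Callable[[List[str]], str], cases: List[Case],
               max_steps: int = 5) -> Tuple[Optional[str], int]:
    """Ask for a candidate, execute it, and feed failures back until the tests pass."""
    feedback: List[str] = []
    for step in range(1, max_steps + 1):
        candidate = propose(feedback)
        error = run_tests(candidate, cases)
        if error is None:
            return candidate, step
        feedback.append(error)  # the next attempt sees every earlier failure
    return None, max_steps


# Hypothetical stand-in for the model: the task is "return the largest element".
ATTEMPTS = [
    "def solve(xs):\n    return sorted(xs)[0]\n",  # first try: returns the minimum by mistake
    "def solve(xs):\n    return max(xs)\n",         # revised after seeing the failure
]


def stub_propose(feedback: List[str]) -> str:
    # Picks the next canned attempt based on how many failures have been reported.
    return ATTEMPTS[min(len(feedback), len(ATTEMPTS) - 1)]


cases = [(([3, 1, 2],), 3), (([7],), 7)]
solution, steps = agent_loop(stub_propose, cases)
print(f"solved in {steps} step(s)")
```

In real training, the feedback history would be fed back into the model's context. The success or failure of each episode would also become the reinforcement signal.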

Through this massive trial-and-error learning in large-scale, highly concurrent real coding environments, Qwen3-Coder learned to solve “long-horizon” tasks that require autonomous planning and tool invocation. This significantly improved both its code execution success rate and its tool-use efficiency.

Lastly, the key aspect that makes my experience with Qwen3-Coder different from previous code generation models is its “repository-level” context length for handling large codebases.

The complexity of software engineering often comes from having to understand vast codebases, and here Qwen3-Coder has a decisive structural advantage: it natively supports a context window of 256K tokens. What does this mean? At a rough average of four characters per token, the model can take in on the order of a million characters of code and documentation in a single interaction.
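To put 256K tokens in perspective, here is a rough check of whether a codebase fits in one context window. Two assumptions apply:

- The 4-characters-per-token ratio is a common rule of thumb. Real counts depend on the tokenizer and the programming language.
- "256K" is read as 262,144 tokens.

The file suffixes and the output reserve are also illustrative choices.

```python
import math
from pathlib import Path
from typing import Iterable

CONTEXT_TOKENS = 256 * 1024   # "256K" read as 262,144 tokens (assumption)
CHARS_PER_TOKEN = 4.0         # rough rule of thumb, not Qwen's actual tokenizer


def estimate_tokens(text: str, chars_per_token: float = CHARS_PER_TOKEN) -> int:
    """Crude token estimate from character count."""
    return math.ceil(len(text) / chars_per_token)


def estimate_repo_tokens(root: str,
                         suffixes: Iterable[str] = (".py", ".js", ".ts", ".java", ".md")) -> int:
    """Sum estimated tokens over source and documentation files under `root`."""
    wanted = set(suffixes)
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in wanted:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(root: str, reserve_for_output: int = 16_384) -> bool:
    """True if the estimated repo size plus room for the model's reply fits in one window."""
    return estimate_repo_tokens(root) + reserve_for_output <= CONTEXT_TOKENS


# At 4 characters per token, the full window is about 1.05 million characters.
print(CONTEXT_TOKENS * CHARS_PER_TOKEN)  # 1048576.0
```

For an exact count, you would run the model's own tokenizer over the files instead of dividing by a constant.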

If the MoE architecture gives the model its potential for intelligence and reinforcement learning gives it problem-solving skills, then the ultra-long context window provides the stage and the materials for it to show its talents. Without a global view of the entire system, even the smartest model is merely a calculator with a narrow field of view. This capability lets Qwen3-Coder move from “generating a valid code snippet” to “carrying out an effective operation on a complex software system”.

This ability to handle “repository-level” code is a prerequisite for solving complex system-level issues, performing large-scale code refactoring, and deeply understanding legacy systems, something many models with smaller context windows cannot achieve.

On the authoritative SWE-Bench leaderboard, which measures how well code models solve real-world software problems, Qwen3-Coder clearly outperforms GPT-4.1, one of OpenAI’s strongest closed-source models. This suggests that this open-source model from China is more effective at complex, real-world programming tasks.

In Agentic Coding, which measures agent capabilities, Qwen3-Coder stands shoulder to shoulder with Claude 4, the benchmark model in this area.

Currently, if you want to get started with Qwen3-Coder, the most direct way is to visit chat.qwen.ai, where you can switch models with a single click in the upper right corner.

If you seek the ultimate “intention-first” coding experience or are already a Vibe Coding veteran, you can try the “super-sized” version via API in various CLI environments, using Qwen3-Coder-480B-A35B-Instruct.

This MoE model has 480B total parameters, of which 35B are active. It natively supports a 256K-token context that can be extended to 1M tokens via YaRN. Simply register an Alibaba Cloud account, complete a quick verification, and create an API key to call the model.

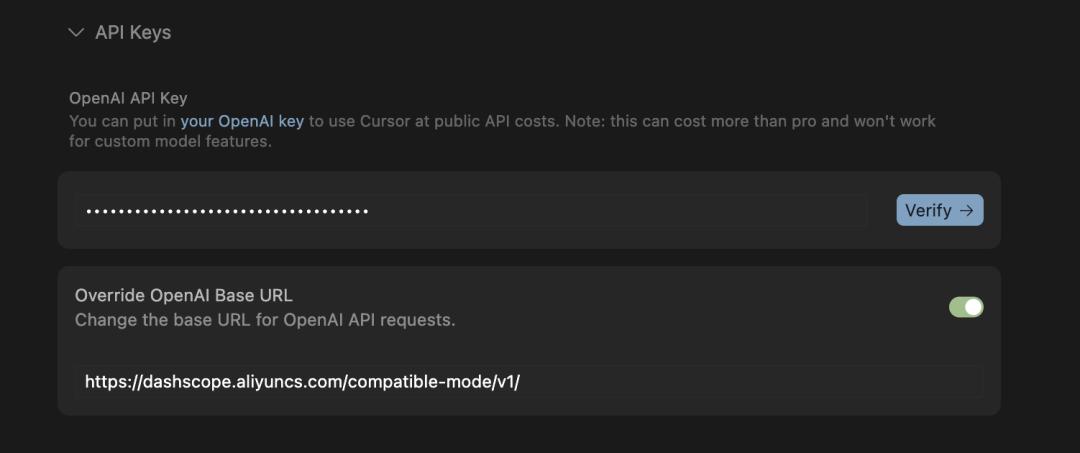

Because it is fully compatible with the OpenAI API format, you can plug this API key into familiar chat or coding tools such as Cursor, Trae, CodeBuddy, or Cline.
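Because the endpoint follows the OpenAI Chat Completions format, a request is just a JSON POST to `/chat/completions` with a bearer token. The sketch below builds that request with Python's standard library, so no SDK is needed. Several values are assumptions to check in the Alibaba Cloud console for your region and account:

- The default base URL is Alibaba Cloud Model Studio's OpenAI-compatible endpoint as I understand it.
- The model ID is an assumed lowercase form of Qwen3-Coder-480B-A35B-Instruct.
- `QWEN_BASE_URL`, `QWEN_MODEL`, and `DASHSCOPE_API_KEY` are illustrative environment-variable names.

```python
import json
import os
import urllib.request

# Assumed defaults: confirm the endpoint and model ID in the console before use.
BASE_URL = os.environ.get("QWEN_BASE_URL", "https://dashscope.aliyuncs.com/compatible-mode/v1")
MODEL = os.environ.get("QWEN_MODEL", "qwen3-coder-480b-a35b-instruct")


def build_chat_request(prompt: str, api_key: str, model: str = MODEL,
                       base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [
            {"role": "system", "content": "You are a helpful coding assistant."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request) -> str:
    """Send the request and return the first choice's message text (needs network access)."""
    with urllib.request.urlopen(req, timeout=120) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]


# Example (not executed here):
# print(send(build_chat_request("Write a quicksort in Python.", os.environ["DASHSCOPE_API_KEY"])))
```

Tools with an "OpenAI-compatible" provider setting typically ask for the same three values: base URL, API key, and model name.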

For users who prioritize data sovereignty and privacy, Qwen3-Coder offers the most complete option: local deployment.

You can download the complete model files directly from Hugging Face or domestic platforms. This means you can run what is currently the strongest open-source coding model entirely privately on your own servers.
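Before downloading, it is worth estimating the hardware. A back-of-the-envelope rule is weight memory ≈ total parameters × bytes per parameter. All 480B parameters typically need to be resident, even though only 35B are active per token, because the router may pick any expert. The byte widths are the standard sizes for each format. The estimate ignores the KV cache, activations, quantization scales, and runtime overhead, so real requirements are higher.

```python
# Bytes per parameter for common weight formats.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_memory_gb(total_params: float, dtype: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes), weights only."""
    return total_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in ("bf16", "fp8", "int4"):
    print(f"{dtype}: ~{weight_memory_gb(480e9, dtype):.0f} GB")
# bf16: ~960 GB
# fp8: ~480 GB
# int4: ~240 GB
```

Even at 4-bit precision, the full 480B model calls for multi-GPU or large-memory server hardware rather than a single consumer card.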

The Global Significance of a Local Choice

In conclusion, the emergence of Qwen3-Coder is not about replacing anyone but empowering everyone. It compresses the comprehensive capabilities of a seasoned development team into a tool that anyone can access.

For a long time, when discussing top coding models, domestic developers seemed to have limited choices. This reflects a key fact: in the field of natural language processing, the accumulation of Chinese corpora provides domestic models with a “home advantage”; however, in programming, code is a universal language. Whether it’s Python, Java, or JavaScript, the syntax and logic are unified globally.

This means that the competition for coding capabilities takes place on a completely fair global stage. In this arena, there are no language barriers, only raw technical strength.

Qwen3-Coder’s leading position on international benchmarks like SWE-Bench means much more than topping a Chinese leaderboard. It shows that China’s self-developed AI models have the technical strength to compete in the most cutting-edge and fiercely contested fields worldwide.

If open-source is an attitude, the current capabilities exhibited by Qwen3-Coder suggest a strong commitment from Alibaba.

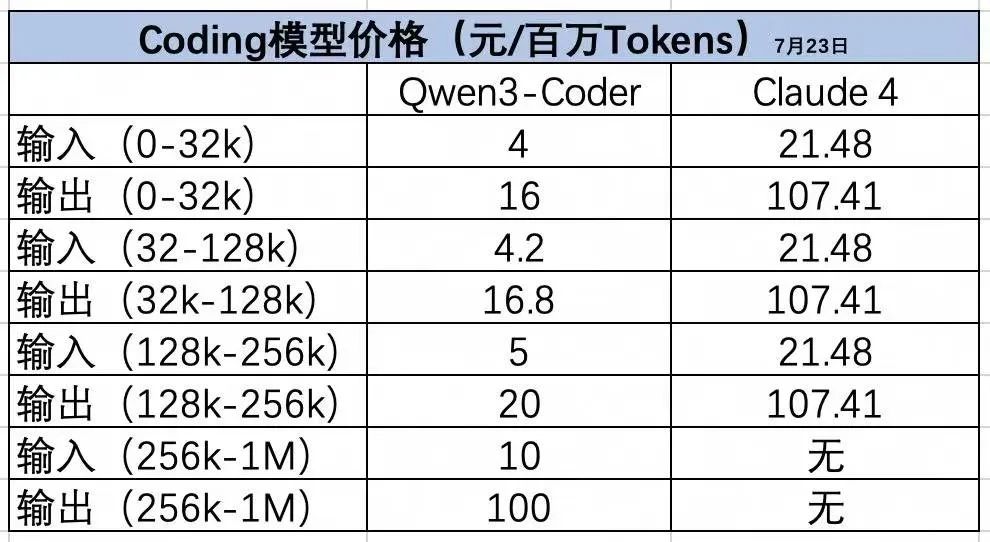

On pricing, Alibaba has released the model as open source free of charge. Its API pricing is also significantly lower than that of comparable overseas models.

More importantly, this is an open-source model from China. That alone means Chinese users can call it reliably at any time, without worrying about network conditions, supply restrictions, or access speeds.

It may not be the only option, but it is heartening to see that in the race for coding large models, domestic developers have finally welcomed a reliable, friendly, and sufficiently effective local contender.